About Parquet.Net

Parquet.Net is a fully managed, safe, extremely fast .NET library to read and write Apache Parquet files. It is designed for the .NET world and is not a wrapper. It targets .NET 8, .NET 7, .NET 6.0, .NET Core 3.1, .NET Standard 2.1 and .NET Standard 2.0.

Whether you want to build apps for Linux, macOS, Windows, iOS, Android, Tizen, Xbox, PS4, Raspberry Pi, Samsung TVs or much more, Parquet.Net has you covered.

Why

Parquet is a great format for storing and processing large amounts of data, but it can be tricky to use with .NET. That's why this library is here to help. It's a pure library that doesn't need any external dependencies, and it's super fast - faster than Python and Java, and other C# solutions. It's also native to .NET, so you don't have to deal with any wrappers or adapters that might slow you down or limit your options.

This library is the best option for parquet files in .NET. It has a simple and intuitive API, supports all the parquet features you need, and handles complex scenarios with ease.

Also it:

Has zero dependencies - pure library that just works.

Really fast. Faster than Python and Java, and alternative C# implementations out there. It's often even faster than native C++ implementations.

.NET native. Designed to utilise .NET and made for .NET developers, not the other way around.

Not a "wrapper" that forces you to fit in. It's the other way around: it forces Parquet to fit into .NET.

Quick start

Parquet is designed to handle complex data in bulk. It's column-oriented, meaning that data is physically stored in columns rather than rows. This matters for big data systems that process only a subset of columns, because reading just the columns you need is extremely efficient.

As a quick start, suppose we have the following data records we'd like to save to parquet:

Timestamp.

Event name.

Meter value.

Or, to translate it to C# terms, this can be expressed as the following class:
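A minimal sketch of such a class. The class name `Record` and the property names are assumptions derived from the three fields listed above, not names confirmed by this text:

```csharp
using System;

// Hypothetical record type: the class name and property names are
// assumptions based on the three fields listed above.
public class Record
{
    public DateTime Timestamp { get; set; }
    public string EventName { get; set; } = "";
    public double MeterValue { get; set; }
}
```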

Writing data

Let's say you have around a million events like that to save to a .parquet file. There are three ways to do that with this library, ordered from easiest to hardest.

Writing with class serialisation

The first one is the easiest to work with, and the most straightforward. Let's generate those million fake records:
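One way to generate such records with LINQ is sketched below. The `Record` class and the generated values are illustrative assumptions. Any data of the same shape would do.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Generate one million fake events; the values are arbitrary placeholders.
DateTime start = DateTime.UtcNow;
List<Record> data = Enumerable.Range(0, 1_000_000)
    .Select(i => new Record
    {
        Timestamp = start.AddSeconds(i),        // one event per second
        EventName = i % 2 == 0 ? "on" : "off",  // alternate two event names
        MeterValue = i
    })
    .ToList();

// Hypothetical record type (names are assumptions).
public class Record
{
    public DateTime Timestamp { get; set; }
    public string EventName { get; set; } = "";
    public double MeterValue { get; set; }
}
```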

Now, to write these to a file in say /mnt/storage/data.parquet you can use the following line of code:
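A sketch of that call, assuming Parquet.Net 4.x, where `ParquetSerializer` lives in the `Parquet.Serialization` namespace. The `Record` class and the sample data are illustrative assumptions. The `SerializeAsync` call is the one line that matters.

```csharp
using System;
using System.Linq;
using Parquet.Serialization;

// Sample data (illustrative values only).
DateTime start = DateTime.UtcNow;
var data = Enumerable.Range(0, 1_000_000)
    .Select(i => new Record
    {
        Timestamp = start.AddSeconds(i),
        EventName = i % 2 == 0 ? "on" : "off",
        MeterValue = i
    })
    .ToList();

// The schema is inferred from the public properties of Record.
await ParquetSerializer.SerializeAsync(data, "/mnt/storage/data.parquet");

// Hypothetical record type (names are assumptions).
public class Record
{
    public DateTime Timestamp { get; set; }
    public string EventName { get; set; } = "";
    public double MeterValue { get; set; }
}
```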

That's pretty much it! You can customise many things in addition to the magical magic process, but if you are a really lazy person that will do just fine for today.

Writing untyped data

Another way to serialise data is to use the untyped serializer.

Writing with low level API

And finally, the lowest level API is the third method. This is the most performant, most Parquet-resembling way to work with data, but least intuitive and involves some knowledge of Parquet data structures.

First of all, you need a schema. Always. Just like in the row-based example, the schema can be declared in the following way:
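A sketch of a schema for the three fields, assuming Parquet.Net 4.x (`ParquetSchema` and `DataField<T>` in the `Parquet.Schema` namespace). The field names are assumptions chosen to match the record described earlier.

```csharp
using System;
using Parquet.Schema;

// One DataField per column; the generic argument is the column's CLR type.
var schema = new ParquetSchema(
    new DataField<DateTime>("Timestamp"),
    new DataField<string>("EventName"),
    new DataField<double>("MeterValue"));
```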

Then, data columns need to be prepared for writing. As Parquet is a column-based format, the low level API expects data as whole column slices rather than rows. I'll just shut up and show you the code:
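A sketch of preparing the three columns, assuming Parquet.Net 4.x (`DataColumn` in the `Parquet.Data` namespace). Field names and generated values are illustrative assumptions. Each array holds every value of one column, in row order.

```csharp
using System;
using System.Linq;
using Parquet.Data;
using Parquet.Schema;

var schema = new ParquetSchema(
    new DataField<DateTime>("Timestamp"),
    new DataField<string>("EventName"),
    new DataField<double>("MeterValue"));

const int count = 1_000_000;
DateTime start = DateTime.UtcNow;

// Each DataColumn pairs a schema field with an array of that field's type.
var column1 = new DataColumn(
    schema.DataFields[0],
    Enumerable.Range(0, count).Select(i => start.AddSeconds(i)).ToArray());

var column2 = new DataColumn(
    schema.DataFields[1],
    Enumerable.Range(0, count).Select(i => i % 2 == 0 ? "on" : "off").ToArray());

var column3 = new DataColumn(
    schema.DataFields[2],
    Enumerable.Range(0, count).Select(i => (double)i).ToArray());
```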

Important thing to note here - columnX variables represent data in an entire column, all the values in that column independently from other columns. Values in other columns have the same order as well. So we have created three columns with data identical to the two examples above.

Time to write it down:
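A sketch of the write itself, assuming Parquet.Net 4.x. The schema, field names and values are illustrative assumptions repeated here so the block stands alone. `File.Create` is used so that an existing file is truncated.

```csharp
using System;
using System.IO;
using System.Linq;
using Parquet;
using Parquet.Data;
using Parquet.Schema;

var schema = new ParquetSchema(
    new DataField<DateTime>("Timestamp"),
    new DataField<string>("EventName"),
    new DataField<double>("MeterValue"));

const int count = 1_000_000;
DateTime start = DateTime.UtcNow;
var column1 = new DataColumn(schema.DataFields[0],
    Enumerable.Range(0, count).Select(i => start.AddSeconds(i)).ToArray());
var column2 = new DataColumn(schema.DataFields[1],
    Enumerable.Range(0, count).Select(i => i % 2 == 0 ? "on" : "off").ToArray());
var column3 = new DataColumn(schema.DataFields[2],
    Enumerable.Range(0, count).Select(i => (double)i).ToArray());

// Output stream -> writer -> row group writer; disposed in reverse order.
using (Stream fs = File.Create("/mnt/storage/data.parquet"))
using (ParquetWriter writer = await ParquetWriter.CreateAsync(schema, fs))
using (ParquetRowGroupWriter groupWriter = writer.CreateRowGroup())
{
    // Columns are written sequentially, in schema order.
    await groupWriter.WriteColumnAsync(column1);
    await groupWriter.WriteColumnAsync(column2);
    await groupWriter.WriteColumnAsync(column3);
}
```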

What's going on?

We are creating output file stream. You can probably use one of the overloads in the next line though. This will be the receiver of parquet data. The stream needs to be writeable and seekable.

ParquetWriter is a low-level class and the root object to start writing from. It mostly performs coordination, checksumming and enveloping of other data.

A row group is like a data partition inside the file. In this example we have just one, but you can create more if there are too many values to fit in computer memory.

Three calls to row group writer write out the columns. Note that those are performed sequentially, and in the same order as schema defines them.

Read more on writing here which also includes guides on writing nested types such as lists, maps, and structs.

Reading data

Reading data also has three different approaches, so I'm going to unwrap them here in the same order as above.

Reading with class deserialisation

Provided that you have written the data, or just have some external data with the same structure as above, you can read those by simply doing the following:
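A sketch of the read, assuming Parquet.Net 4.x and a hypothetical `Record` class whose property names match the column names in the file:

```csharp
using System;
using System.Collections.Generic;
using Parquet.Serialization;

// Columns are mapped to Record's public properties by name.
IList<Record> data =
    await ParquetSerializer.DeserializeAsync<Record>("/mnt/storage/data.parquet");

Console.WriteLine($"Read {data.Count} records");

// Hypothetical record type (names are assumptions).
public class Record
{
    public DateTime Timestamp { get; set; }
    public string EventName { get; set; } = "";
    public double MeterValue { get; set; }
}
```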

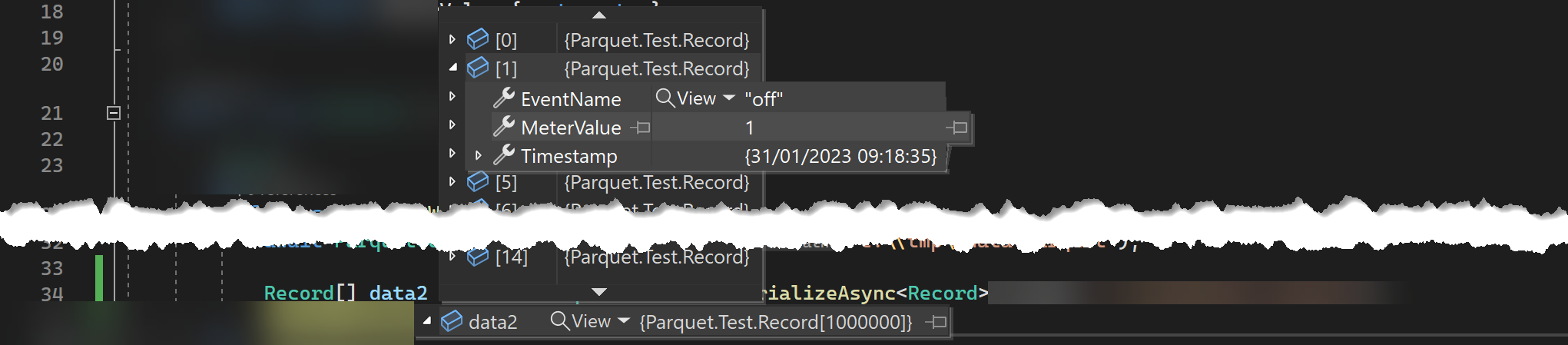

This will give us an array with one million class instances similar to this:

Of course class serialisation has more to it, and you can customise it further than that.

Reading untyped data

Read here for more information on how to read untyped data.

Reading with low level API

And with low level API the reading is even more flexible:
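A sketch of reading every column of every row group, assuming Parquet.Net 4.x. What to do with each column is up to you. Here it only prints the field name and value count.

```csharp
using System;
using System.IO;
using Parquet;
using Parquet.Data;
using Parquet.Schema;

using (Stream fs = File.OpenRead("/mnt/storage/data.parquet"))
using (ParquetReader reader = await ParquetReader.CreateAsync(fs))
{
    // A file may contain several row groups; visit each one in turn.
    for (int i = 0; i < reader.RowGroupCount; i++)
    {
        using (ParquetRowGroupReader rowGroupReader = reader.OpenRowGroupReader(i))
        {
            // Read the columns in the order the schema declares them.
            foreach (DataField field in reader.Schema.GetDataFields())
            {
                DataColumn column = await rowGroupReader.ReadColumnAsync(field);
                Console.WriteLine($"{field.Name}: {column.Data.Length} values");
            }
        }
    }
}
```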

This is what's happening:

Create read stream fs.

Create ParquetReader, the root class for read operations.

The reader has a RowGroupCount property which indicates how many row groups (like partitions) the file contains.

Explicitly open row group for reading.

Read each DataField from the row group, in the same order as it's declared in the schema.

Choosing the API

If you have a choice, then the choice is easy: use the low level API. It is the fastest and the most flexible. But what if you for some reason don't have a choice? Then think about this:

| Feature | Class Serialisation | Untyped Serializer API | Low Level API |
|---|---|---|---|
| Performance | high | very low | very high |
| Developer Convenience | C# native | feels like Excel | close to Parquet internals |
| Row based access | easy | easy | hard |
| Column based access | C# native | hard | easy |